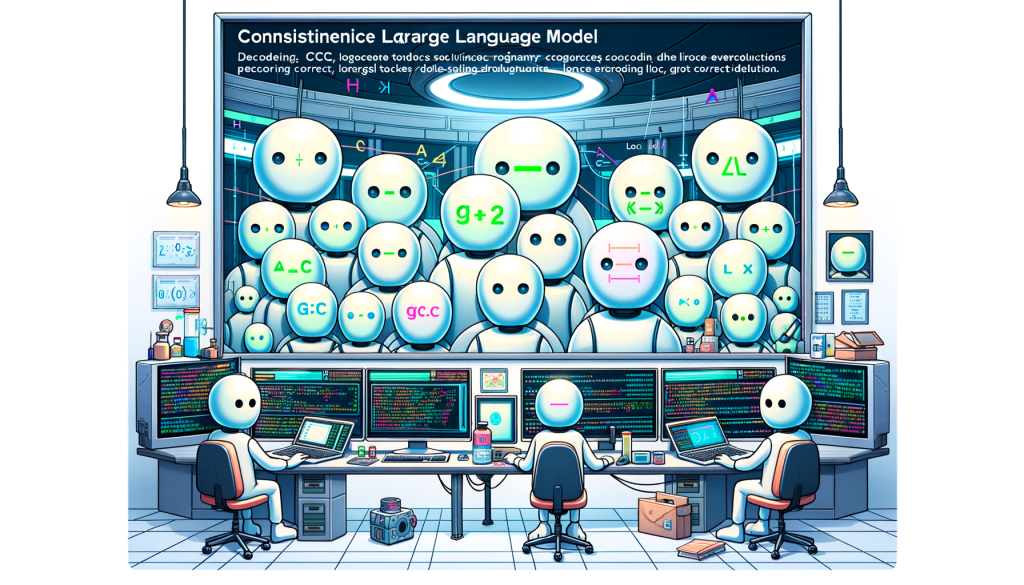

The document introduces Consistency Large Language Models (CLLMs), a new family of parallel decoders that reduce inference latency by decoding an n-token sequence at each inference step. CLLMs are trained to map any randomly initialized n-token sequence to the same result that autoregressive (AR) decoding would yield, in as few steps as possible. The method delivers significant gains in generation speed, comparable to other fast-inference techniques such as Medusa2 and Eagle, without requiring additional memory cost. The document discusses Jacobi decoding, which recasts the sequential generation process as a system of n non-linear equations that can be solved in parallel. It also details the CLLM training process, including the global consistency (GC) loss, the local consistency (LC) loss, and the traditional AR loss. CLLMs achieve significant speedups both in specialized domains and on open-domain conversational challenges, at moderate fine-tuning cost. In addition, CLLMs can predict correct tokens preemptively, and they become proficient in numerous collocations through the consistency generation objective.
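
To make the Jacobi decoding idea concrete, the following is a minimal sketch and not the document's implementation. It assumes greedy decoding and replaces the language model with a hypothetical deterministic function, `toy_next_token`, so that it runs without any model weights; the names `ar_decode` and `jacobi_decode` are likewise illustrative. Each Jacobi iteration updates all n positions from the previous iterate only, which is what allows a real model to compute them in a single parallel forward pass.

```python
import random


def toy_next_token(context, vocab_size=50):
    """Hypothetical stand-in for an LLM's greedy argmax.

    Returns a token id that depends deterministically on the whole context.
    """
    h = 17
    for tok in context:
        h = (h * 31 + tok + 7) % 1_000_003
    return h % vocab_size


def ar_decode(prompt, n):
    """Ordinary autoregressive greedy decoding: one token per step."""
    out = []
    for _ in range(n):
        out.append(toy_next_token(prompt + out))
    return out


def jacobi_decode(prompt, n, vocab_size=50, seed=0):
    """Jacobi fixed-point iteration over an n-token block.

    Solves y_i = f(prompt, y_1..y_{i-1}) for i = 1..n by starting from a
    random guess and refreshing every position from the previous iterate.
    Returns the converged tokens and the number of iterations used.
    """
    rng = random.Random(seed)
    guess = [rng.randrange(vocab_size) for _ in range(n)]
    steps = 0
    while True:
        # Every position reads only the old guess, so the n updates are
        # independent of each other and could run in parallel.
        new = [toy_next_token(prompt + guess[:i]) for i in range(n)]
        steps += 1
        if new == guess:  # fixed point reached: no token changed
            return new, steps
        guess = new


if __name__ == "__main__":
    prompt = [3, 1, 4]
    tokens, steps = jacobi_decode(prompt, n=8)
    print("jacobi:", tokens, "iterations:", steps)
    print("ar:    ", ar_decode(prompt, n=8))
```

In this sketch the first k tokens are guaranteed correct after k iterations, so the loop needs at most n + 1 iterations (the last one only confirms the fixed point), and the unique fixed point is the AR greedy output. With an untrained toy function there is little reason to converge faster than that bound; the training objective summarized above is what is meant to let a CLLM reach the fixed point in far fewer iterations.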