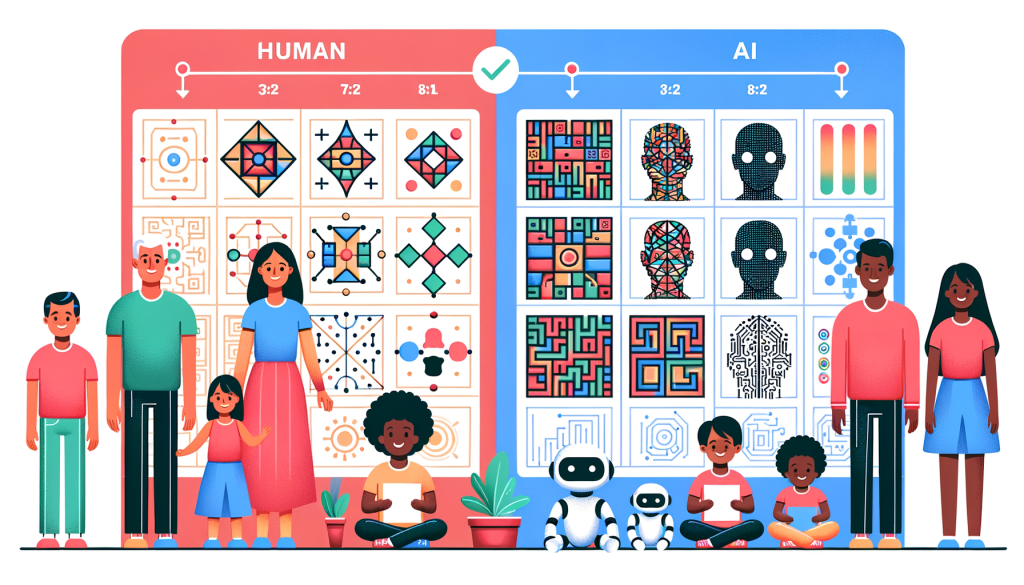

The Arc Prize Foundation, co-founded by AI researcher François Chollet, has developed a new test, ARC-AGI-2, to assess the general intelligence of AI models. The test has proven challenging: leading models such as OpenAI’s o1-pro and DeepSeek’s R1 score between 1% and 1.3%, while humans who took the test averaged 60%. ARC-AGI-2 consists of puzzle-like problems that require an AI to identify visual patterns and adapt to challenges it has not seen before. This iteration aims to address flaws in its predecessor by emphasizing efficiency and the ability to interpret patterns rather than reliance on brute-force computing. Chollet noted that the new test is a better measure of AI intelligence because it prevents models from simply memorizing solutions. The foundation has also launched a contest challenging developers to reach 85% accuracy on the new test while minimizing costs. Overall, ARC-AGI-2 seeks to provide a more nuanced benchmark for evaluating AI’s capabilities.